The Tensormesh Blog

Deep dives into distributed AI compute, GPU infrastructure, and enterprise ML engineering.

Tensormesh Raises $5.2M Seed Round to Scale Distributed AI Compute

We are thrilled to announce our $5.2M seed round, led by Laude Ventures, which will accelerate the buildout of our distributed GPU infrastructure platform for enterprise ML teams.

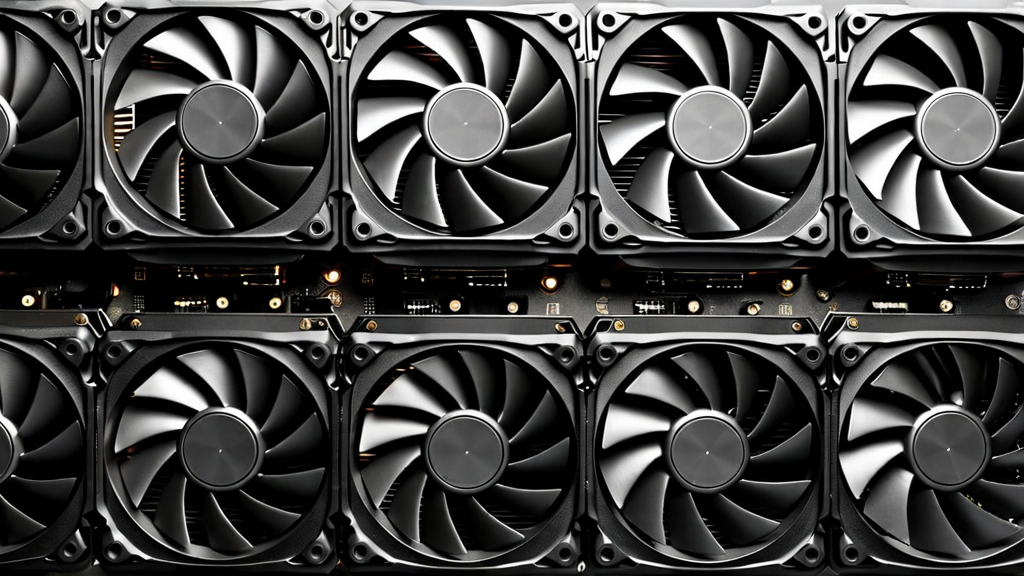

Building High-Performance GPU Clusters for Enterprise ML Training

A practical guide to designing, networking, and operating multi-node GPU clusters optimized for large-scale deep learning workloads.

Optimizing LLM Inference at Scale: Techniques and Best Practices

Quantization, KV caching, speculative decoding, and continuous batching — a deep dive into production LLM serving optimization.

Distributed Training Strategies for Large Language Models

From data parallelism to 3D parallelism — how modern LLM training splits computation across hundreds of GPUs efficiently.

The Economics of AI Compute: On-Premise vs Cloud vs Hybrid

How to model total cost of ownership for AI compute across different deployment models — and when each strategy makes financial sense.

Tensor Parallelism Explained: How Modern AI Splits Computation

A technical walkthrough of tensor parallelism — the key technique that allows training models too large to fit on a single GPU.

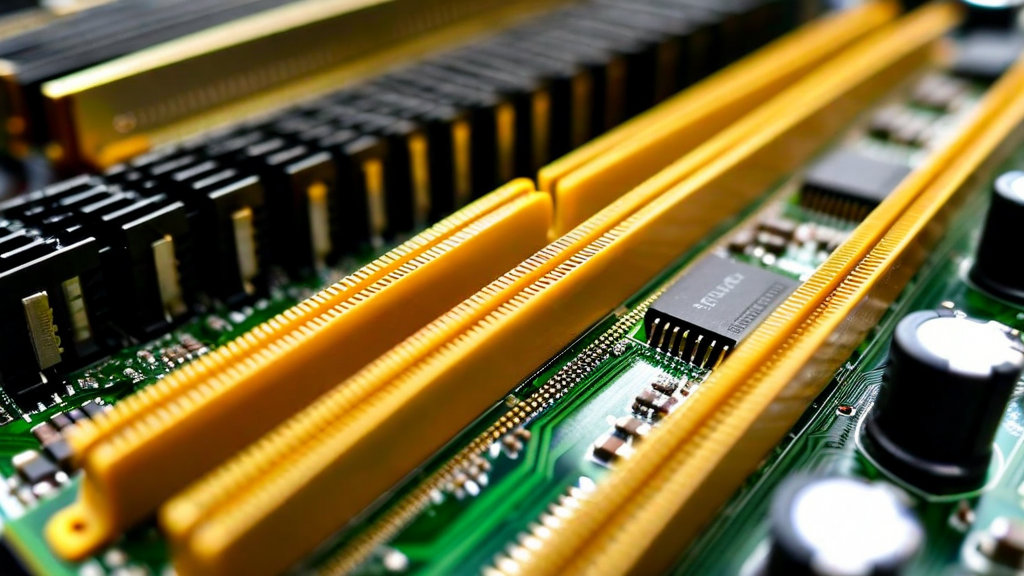

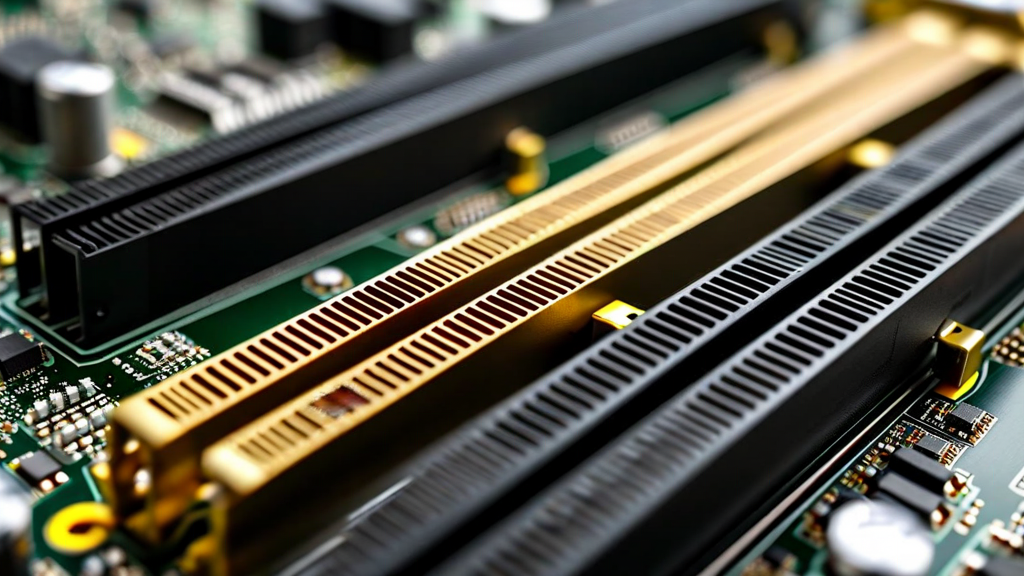

GPU Memory Management for Deep Learning Workloads

Understanding GPU memory hierarchies, activation checkpointing, gradient accumulation, and mixed-precision training to maximize memory efficiency.

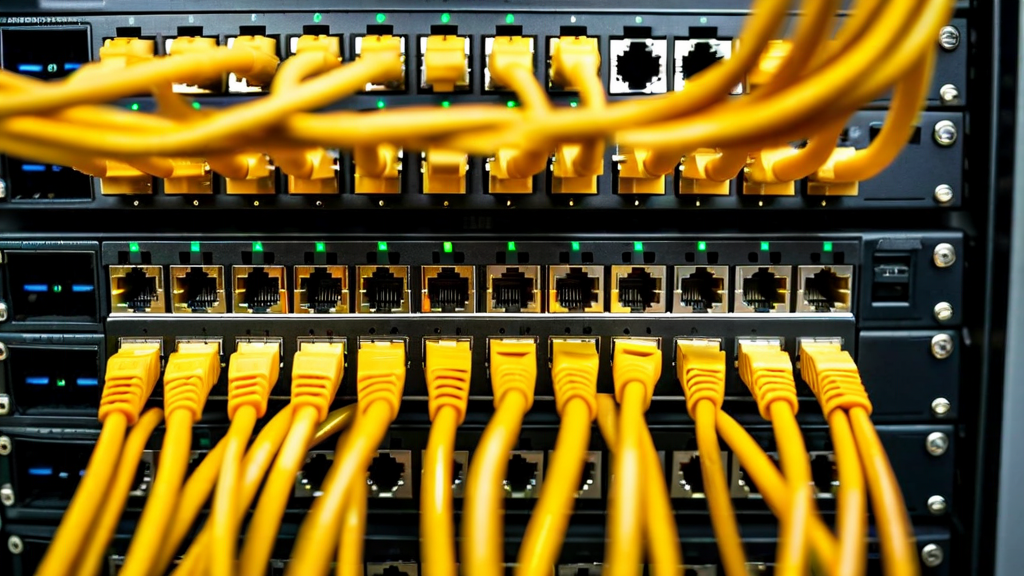

Networking Infrastructure for High-Performance AI Clusters

InfiniBand, RoCE, and high-speed Ethernet — how network topology and protocol choices affect distributed training performance.

Monitoring and Observability in Distributed ML Systems

Building comprehensive observability for distributed training runs — metrics, tracing, alerting, and failure diagnosis at scale.

From Research to Production: Deploying ML Models at Scale

The engineering challenges of moving a research prototype to a production serving system — pipelines, serving frameworks, and SLA management.

The Future of AI Compute: Trends Shaping Enterprise ML Infrastructure

An analysis of the forces reshaping enterprise AI compute — custom silicon, disaggregated memory, and the convergence of training and inference.
